Wednesday, December 2, 2009

Backup database structure only

Whatever your reasons maybe xSQL Object provides the ideal solution to accomplish this – with a click you can take a snapshot of the SQL Server database schema. The schema snapshot contains all the schema information but no data and consequently is a very small size file. You can then use xSQL Object to compare this schema snapshot to other snapshots or to live databases and see exactly what changed from one schema version to another.

Tuesday, November 24, 2009

T-SQL: set end date to first or fifteenth of the month

I am sure there are many ways to do this but here is one:

DECLARE @sDate DATETIME -- subscription date

DECLARE @sYears tinyint -- number of years the subscription will last

SET @sDate = getdate()

SET @sYears = 1

IF datepart(dd, @sDate) <= 15

SELECT DATEADD(year, @sYears, @sDate) - datepart(dd, @sDate) + 15

ELSE

SELECT DATEADD(year, @sYears, DATEADD(mm, DATEDIFF(m,0,@sDate)+1,0))

Script Executor - package t-sql scripts into executables

An executable package in the context of the Script Executor is a small, dynamically generated executable file that contains embedded in it scripts, list of databases against which the scripts will be executed, and a small piece of code to run these scripts. You can run it as you would run any other Windows program.

Executable packages are suited for deploying Sql script to the end-users/clients. A user can run it without having Script Executor, or SSMS installed.

Monday, November 23, 2009

SQL Server execute scripts - multiple scripts, multiple databases

No more! You can now use Script Executor to take care of this job for you. One time only, you will go through a simple, and may I suggest fun, process of organizing your scripts into virtual containers and your databases into groups; then, map those script containers to the database groups; set an order of precedence if you need to and voila you have a package that you can now execute with a single click; or, you can even schedule it to run before you even make it to the office.

Script Executor will execute each script in the order you have defined against each database based on the mappings you have defined and once completed it will generate a comprehensive report allowing you to see how the script execution went on each target and also easily “flip through” the result sets that those scripts may generate.

Deploying (executing) multiple scripts against multiple SQL Server databases has never been easier.

You can download a free trial version of Script Executor from: http://www.xsql.com/download/script_executor/

A free community edition of Script Executor with limited functionality is also available: http://www.xsql.com/download/script_executor/

Applies to:

- SQL Server execute t-sql scripts

- SQL Server execute multiple scripts

- SQL Server deploy scripts to multiple databases

- SQL Server package t-sql scripts

- SQL Server run multiple scripts

Tuesday, November 17, 2009

Kindle, Zune, iPod - a free gift with every purchase

Friday, October 16, 2009

Is Bing beating Yahoo already?

The poll has been closed - the final result was 60% for Bing and 40% for Yahoo however, the number of responses we got was statistically insignificant so I would put no stock on these results.

Friday, October 2, 2009

$100 for your SQL puzzle

If your puzzle is chosen to be published on our site we will:

- Send you a $100

- Link from the puzzle page to your blog or your site

The harder your puzzle the higher the chances that it will be picked, however,

The puzzles should fall into one or more of the following categories: SQL Server, T-SQL, Database Management Systems

Submit your puzzle here: sales@xsql.com

Thursday, September 17, 2009

To pay or not to pay this is the question

Knowing how important those reviews are we have sent tenths of product review requests in the last 6 years and in the process we have learned the unsettling truth about product reviews: they don’t come by easily. In the case of the big guys (technical magazines and large user group sites) you don’t have to pay for the product review per-say but your product will forever be in the queue unless you are shelling out a few thousand advertising dollars in those medias, only then does your product magically shoot up to the top of the product review queue; whereas in the case of the smaller guys (bloggers and user group contributors) you often have to pay for the review anywhere from a set price of as low as $200 for one product review to a per word price that can be as high as $0.6 per word (this is the highest product review offer we have received).

In the case of the big guys since you are not paying directly for the product review there is no moral dilemma – deep down you know that the review can’t possibly be fair and un-biased. If you are paying $30K / year for advertisements on that media the last thing they would want to do is alienate you with a bad product review, but, it’s easy to shun those thoughts, after all this is your product and you truly believe that it is a great product.

On the other hand, when it comes to paying for the review directly it feels like cheating and unfair to the reader who believes that he is reading an impartial review. For it to feel right one must disclose in big bold letters that “this review has been paid for by the product vendor…”, but such disclosure would likely deem the review worthless. Ideally you would want someone to review your product simply because they like the product and they want to share it with other people but in all fairness reviewing a software product and then writing up your observations and conclusions may take hours of work of which we humans have a very limited supply of. Herein lies our dilemma – to pay or not to pay?

Until now we have chosen not to pay, instead we have only offered the reviewers a free license for the product they decide to review which we think is fair and does not present a conflict of interest. We would certainly be more comfortable continuing with this approach but the results are not very promising hence our pondering.

Let us know what you think – would you pay?

And of course, we would be delighted if you would consider reviewing any of our fine products in exchange for a free license.

Wednesday, September 2, 2009

Synchronize two SQL Server databases

Is this time for panic? No it is not, it is time for xSQL Object – in just a few clicks it will show you exactly where the differences are on both sides and better yet auto-generate the scripts that you need to quickly and safely make the changes.

Download it now and eliminate “the pain” – free lite edition with no strings attached. Supports SQL Server 2000, SQL Server 2005 and SQL Server 2008.

Friday, August 28, 2009

Best way to execute t-sql scripts

Friday, July 31, 2009

New build of xSQL Bundle available

The new build adds the ability to include and exclude object level permissions from the synchronization. Permissions are now shown under each object they're granted to or denied on. Statement permissions are listed under the database node in the comparison grid.

Monday, May 25, 2009

xSQL Builder now supports SQL Server 2008

No more sending T-Sql scripts to your clients - send them an executable instead!

Download your free trial of xSQL Builder now: http://www.xsql.com/download/sql_database_deployment_builder/

Wednesday, May 6, 2009

Right tool for the job - what our customers say

http://catholicinformation.aquinasandmore.com/2009/05/06/using-the-right-tools-for-the-job/ - it is in such cases that we can proudly say: you don't have to listen to us - see what others are saying...

Thursday, March 26, 2009

Execute Sql Scripts on DB2

You can use Script Executor to execute scripts against DB2 9.0 databases.

You can read more and download your trial copy of Script Executor from: http://www.xsql.com/products/script_executor/

Wednesday, March 25, 2009

Script Executor now supports DB2 and SQL Server Compact

Script Executor provides for deploying Sql Scripts to multiple target databases with a click of a button and allows you to:

- organize target databases into logical groups

- organize your Sql Scripts into containers and order scripts within each container

- map script containers to database groups

- order mappings and set precedence constraints

- get a comprehensive execution report

- view execution results in one integrated easy to navigate interface

Download your trial copy today from: http://www.xsql.com/download/script_executor/

Monday, March 9, 2009

Independent consultants can quickly translate hours saved to dollars - employees can't

So, that got me thinking: why would someone readily pay a few hundred dollars from his own pocket for one of our tools while a full time employee seems to struggle to make up his mind and appears to be constantly looking to come up with unusual scenarios that the off the shelf tool may not handle? I have come up with three main reasons (not necessarily an exhaustive list of reasons):

- the value preposition for the independent consultant is very clear – every hour of their time has a price tag – if they find a tool that saves them 1 hour per day they can quickly translate that saved hour into dollars. On the other hand a full time employee can not easily convert “hours saved” to money, nor can his boss or the boss’ boss.

- The bureaucracy that plagues many organizations significantly dilutes the short term value of such tools - if you as an employee are forced to go through hoops to get approval for purchasing a $300 product that will potentially save you a couple of hours per week then you may decide it is not worth the pain. The independent consultant on the other hand does not need to ask for permission from anyone so there are no “costs” associated with the approval process.

- A programmers ego: “I can do this stuff myself, why should I pay someone else to do it for me” When it comes to productivity an independent consultant can not afford to have an ego whereas a full time employee can and furthermore, by building a custom solution instead of buying an off the shelf tool the full time programmer will likely consolidate his position with the company. A lot of you may be surprised but I have found that it is often easier to convince the higher ups to let you put 100 hours on a project than convince them to let you spend $300 on a tool.

Please note that this is not a “research based” conclusion – it is simply an opinion supported by personal experiences. Please feel free to add your perspective on this and don't forget to check out our tools - you don't even have to pay anything in some cases.

Friday, March 6, 2009

A real life Sql scripts deployment story

For the last few months he was using the free community edition of our Script Executor and it had helped him a lot but it wasn't exactly what he wanted whereas the new Script Executor 3 gave him exactly what he needed - he spent about 15 minutes configuring his Sql script deployment package and saved the project. Now, all he needs to do is click Execute and a task that once took hours takes him one minute to launch and about 10 - 15 minutes to review the results.

His words: "I would have gladly paid the $179 from my pocket if the company would not have approved the purchase. Thank you for an awesome product!"

Well, thank you! For us there is nothing better than knowing that our work is providing real value to others.

Wednesday, March 4, 2009

Execution order and precedence

This cool feature allows the user to configure highly complex deployment scenarios involving dependencies between scripts and groups of scripts. The picture below shows where the Execution order and precedence constraint can be set for a given mapping.

Friday, February 27, 2009

The 7 countries that have “lost the number” of our site!

Every time I look at the World map on google analytics, that cool map that shows where the visitors came from, I see a handful of white spots – that is countries from which we did not have any visitors in the last 30 days. I am always curious to know which ones those countries are but they are usually on the same general area so I don’t often drill down. Today it occurred to me that it would be interesting to go back in time and see if there were any countries from which no one ever visited our website – I set the range from January 1, 2008 to today and found that in those 14 months we have not had a single visitor from 7 countries out of 200 and some countries. And the “winners” are:

From Africa

- Burkina Faso

- Central African Republic

- Madagascar

- Mauritania

- Western Sahara

From Asia:

- Kyrgyzstan

- Turkmenistan

Please do keep in mind that our website: http://www.xsql.com is a niche site providing database tools to database administrators and software developers and therefore, this “finding” does not necessarily mean anything. I just found this interesting and decided to share with ya’ll!

Executing multiple Sql Scripts

Well, you don't have to go through that annoying process anymore - with Script Executor, in just minutes, you can configure a highly complex scenario involving hundreds of scripts and tenths of target servers after which your highly complex deployment will require just one click or even none if you use the command line utility.

Download Script Executor now and see for yourself why we are so excited about it!

Wednesday, February 25, 2009

Where do MySQL users congregate?

We are kind of new to the MySQL world and are trying to figure out what are THE "places" to go if you want to reach MySQL users. Our tools are primarily SQL Server tools but we have just released a new tool, Script Executor that supports MySQL as well. The tool in a nutshell allows the user to execute Sql Scripts against multiple MySQL databases – it is a great tool for any database administrator or developer that works with MySQL. We are looking for two things:

- users who would be willing to review the product

- web destinations where we should advertise

So, if you are either someone interested in reviewing Script Executor or someone who knows where we should advertise please email us at our info address on our xsqlsoftware.com domain or simply put a comment here.

Tuesday, February 24, 2009

The Script Executor you have been waiting for

The previos version of Script Executor has been renamed to "Script Executor Community Edition" and will continue to be available free of charge from our website.

Check the new Script Executor out - you will be impressed!

Download your free trial now: http://www.xsql.com/download/script_executor/

Wednesday, February 4, 2009

Best way to compare data in two SQL Server databases

So you need to compare the data in two SQL Server databases – maybe just the data in certain tables, maybe certain rows only and maybe you are just interested in certain columns. And what if the tables you need to compare don’t have a primary key defined (well, they all should but it is not rare to see cases when they don’t) how can you approach the task? A quick search on google will reveal an abundance of tools all claiming to do what you need so how do you know which one to pick?

We have made the task simple for you. We have tested every single one of those tools and the bad news is that with the majority of them you would be wasting your time. There are only very few that do the job right and we are happy and proud to say that xSQL Data Compare is the best tool you will find in terms of breadth of functionality it provides, flexibility it gives the user, performance with large databases and last but not lease cost. No, you don’t have to take our word for it – we have thousands of very happy users the majority of which have not had to pay a dime for it. You see, xSQL Data Compare is completely free for SQL Server Express with no restrictions and it is also free for other editions of SQL Server as long as the databases being compared don’t have more than a certain number of objects.

Here are some highlights of xSQL Data Compare:

- You can compare the data in two sql server databases or just select tables in those databases;

- You can map tables to be compared any way you want regardless of the table names;

- If xSQL Data Compare can’t find a predefined unique key on a table it allows you to define it on the fly as a combination of one ore more columns in that table;

- You can set filters on each table if you wish to compare only a subset of the rows;

- You can include or exclude columns from the comparison;

- You can choose to use bulk insert for the synchronization if you expect that a large number of rows will need to be inserted in the target table.

xSQL Data Compare supports SQL Server 2008, SQL Server 2005 and SQL Server 2000.

You can download your copy from: http://www.xsql.com/download/sql_server_data_compare/

Tuesday, February 3, 2009

Documenting your databases – one tool does it all

Here is a perfect excuse that I have heard way too often: “by the time we are done documenting things have already changed so the documentation becomes outdated before it is even completed”. That might have been true in the distant past but not any more. With the right tool you can document your databases like SQL Server, DB2, Oracle, MySQL, Informix virtually any platform, in minutes – you can even create a job that automatically updates the documentation every day. One of the best tools out there to do the job is xSQL Documenter which allows you to simultaneously generate compiled CHM and / or HTML documentation for all your databases.

What’s even better, a great tool like this does not cost a fortune – for less than $200 you will get an incredible tool that will save your organization hundreds of hours of work.

xSQL Documenter supports the following platforms: SQL Server, Oracle, DB2, MySQL, Informix IDS, Sybase ASE, Sybase SQL Anywhere, PostgreSQL, Access, VistaDB, ENEA Polyhedra;

You can download your copy of xSQL Documenter from http://www.xsql.com/download/database_documenter/

Thursday, January 29, 2009

What’s the interest on the $819B stimulus bill?

So, what does this have to do with SQL Server tools? Nothing really other than these numbers are so amazingly big that it is hard to just ignore them and focus on our job which is to build awesome tools for SQL Server database administrators and developers.

Wednesday, January 28, 2009

Another presentation on RSS Reporter

Monday, January 19, 2009

Deploying database schema changes to hundreds of clients

So, what do you do? Well, you use xSQL Builder which was designed to alleviate this kind of trouble. xSQL Builder is extremely easy to use but at the same time, if your scenario is more complicated it gives you all the flexibility to customize it to best fit your needs. How does it work? You go through a simple wizard that takes no more than a couple of minutes and allows you to choose the source or “master” database which you want to deploy to your customers, set a few parameters and generate an executable package that you can then send to your clients. The client runs the executable which compares the embedded schema of the source/master database with the client’s version of the database, generates a synchronization script, executes the script and emails you the results. Now, you have to admit that is a cool and extremely efficient way to deploy your database schema changes.

You can download your copy of xSQL Builder from: http://www.xsql.com/download/sql_database_deployment_builder/

Thursday, January 15, 2009

Wrap your insert, update and delete statements on a transaction – ALWAYS

Well, we are humans and as such we are prone to making mistakes, sometimes, big and costly mistakes so, wouldn’t it be nice to “erase” our mistakes when we realize that? That’s what a simple Begin Transaction statement provides us – the ability to rollback our mistakes. How does one realize that he made a mistake – the first indicator is the “number of rows effected” which SQL Server reports – if that number is larger than you expected then maybe you made a mistake on your statement. How else can I tell? The cool thing is that SQL Server will let you read the uncommitted rows and see what your statement did - so you can issue a select and review the rows you just updated for example. If you do realize that you made a mistake you simply issue a rollback and all is good – it is like it never happened. If you are sure your statement did what you intended it to do then you issue a commit transaction and the changes you just made become permanent.

So again, be diligent and wrap every update, delete or insert statement in a begin transaction / rollback / commit transaction. It is much better to be safe then sorry!

Applies to: SQL Server, SQL Server Mangement Studio, Query Analyzer

Wednesday, January 14, 2009

SQL Server Query Governor way off on its estimates!

So, here is the scenario: as a diligent DBA you audit a SQL Server you just took over and you realize that the query governor cost limit is set to 0 which means in essence that as far as the query governor is concerned any queries can run on the server for as long as they need to run. That’s not good so you go ahead and set a high limit to start with, let’s say 300 seconds. Your thinking is that if a query that is estimated to run for more than 5 minutes ever comes across it should be stopped dead on its tracks before hundreds of calls from angry users reach your desk. To your surprise 5 minutes after you have set that limit you get a call from Jane Doe – she has seen this strange message on her screen she never saw before “you can’t execute this query because it is estimated to take 450 seconds”. Jane assures you that the report she is running usually runs in 5 to 10 seconds!

You go ahead and raise the limit to 500 seconds – Jane runs the report and sure enough the report takes less than 6 seconds! Something is not right – why is the query optimizer so grossly overestimating the time this query will take to run?

The answer is simple indeed – remember the query optimizer is estimating - estimates are made based on available information and if the available information is either missing or not accurate than the estimate won’t be accurate. The information that the query optimizer uses is in the statistics that SQL Server maintains and that is where you need to look. So, check it Auto Create Statistics is on first – if not no then you either need to turn it on or manually create the statistics the optimizer needs for estimating this query (this is the case when the necessary statistics information may be missing). Second check if Auto Update Statistics is on – if not you may need to update the stats to enable the query optimizer to produce a more accurate estimate.

Furthermore, you may find that all is in order that is no statistics are missing and the stats are up to date and yet the estimate is wrong! Try forcing a recompile of the query in question and see what happens. What may have happened is that SQL Server is using a cashed plan that was optimized for the “wrong” parameter values – that is values which are not good representatives of the dataset and based on that plan it is estimating that 10,000 rows will be returned from table a when in fact in 90% of the cases the number of rows returned from table a on that query is under 1000. By forcing a recompile of the query you are forcing SQL Server to re-evaluate and possibly pick a better plan this time based on new parameter values.

The objective of using the query governor cost option is to prevent “stupid” queries from degrading the system performance and not to prevent legitimate queries from running. So you may be forced to raise the limit but you should look at that as a temporary measure until you have dealt with the legitimate queries by taking the above mentioned actions as well as optimizing the query itself. Then, you should go back and start gradually lowering the query governor cost limit.

Monday, January 12, 2009

Why is SQL Server scanning the clustered index when I have an index on the column I am filtering on?

The answer lies in the fact that the SQL Server Query Optimizer is smarter than that (albeit not as smart as I wish it would be). You see, when deciding on a particular execution plan the Query Optimizer has to use an actual value for the parameter x to estimate the number of rows that will be returned on each step. It further assumes that the rows you are looking for are randomly distributed and that it will need to do a page read for every row being returned in addition of the reads it needs to do on the index pages. Depending on this number it makes a determination whether it will be more efficient to just scan the whole table and pull out the rows it needs or go to the col_n index first to get a list of addresses and then go and pull those rows.

So, now that we know why should we just leave it there since it appears that SQL Server is correctly picking the most efficient path? Not so fast! Remember all that evaluation is being done based on a certain value of parameter x. It could be that for most of the values of parameter x scanning the table is more efficient. In that case you can simply drop the index on col_n if it is not needed for any other queries and leave it at that. However, it could be that for 99% of the possible values of x it would be a lot more efficient to utilize the col_n index – it just so happened that unfortunately when generating the plan the value of x happened to fall on that 1% for which scanning is more efficient. In this case you have to options:

- simply force SQL Server to dispose the bad plan that it has cashed and generate a new one; OR

- use a HINT to force SQL Server to use the index. The danger with this however is that with time as the data changes the use of that index may not be optimal so I would recommend that you avoid using HINTS whenever possible and let SQL Server do its job.

One last note: it is often not necessary to return all the columns – in other words instead of SELECT * FROM table1 WHERE col_n = x you may only need to return a couple of columns like SELECT col_1, col_2 FROM table1 WHERE col_n = x in which case it could be worth it to include col_1 and col_2 in the col_n index. That way SQL Server would not need to go to the table at all but instead get all it needs from the index pages. In certain scenarios where you have a rarely updated but very frequently queried table the above approach of including other columns in the col_n index may make sense even if the query returns all the columns.

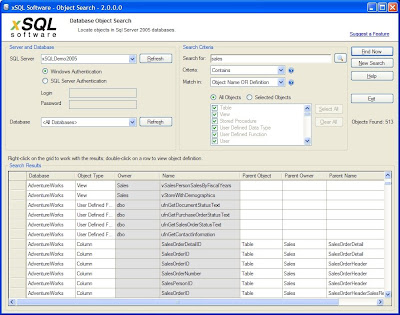

SQL Server search stored procedures

The good news is: you don’t have to struggle with this when there is a really cool and completely free tool called xSQL Object Search available. So how does xSQL Object Search work? Very simple:

- You connect to the server you are interested on

- Choose to search in a certain database or All Databases on that server

- Define your search criteria

- What are you looking for

- How do you want to search (exact match, contains, starts with, ends with, sql server expression, regular expression)

- Choose where do you want to look - the object name, object definition, or both?

- Finally choose which object types you wish to search (all objects or just certain types of objects like stored procedures, views, tables, triggers etc.)

- Click on Find Now (see the screen shot below

xSQL Object Search is a free tool and you can download it from: http://www.xsql.com/download/sql_server_object_search/

Applies to:

SQL Server search tables

SQL Server search views

SQL Server search stored procedures

SQL Server search triggers

SQL Server search functions

Tuesday, January 6, 2009

Free SQL data compare tool

The xSQL Data Compare licensing works exactly the same way as the licensing of xSQL Object described in detail on yesterday’s post here.

Monday, January 5, 2009

Free SQL Compare tools for SQL Server

- everyone downloads the same xSQL Comparison Bundle package that includes xSQL Object and xSQL Data Compare;

- for the first two weeks the applications function as if you had purchased a professional license;

- after the first two weeks the licensing module first checks the edition of the databases you are comparing:

- if both databases are SQL Server Express edition then you see no difference – works the same as before;

- if one of the databases in the comparison is something other than SQL Server Express then the licensing module checks the number of objects in that database (number of tables, stored procedures, views, functions)

- if the number of objects is within the limits of what we call the Lite Edition then again the application works and you don’t see any difference;

- if the number of objects is greater than those limits then you are kindly notified that to compare and sync that database you need to acquire a license. Well, someone has to pay for all that development effort that goes into our products :)